TL;DR

- AI security tools in CI/CD pipelines analyze code or dependencies in isolation, without understanding how builds, artifacts, and runtime behavior connect, making it hard to identify which risks actually matter.

- A 2025 study of 282 security leaders found 40% of alerts go uninvestigated, largely because findings lack the context needed to determine impact or ownership.

- A Pipeline Bill of Materials (PBOM) addresses this by tracking lineage across commit, build, deployment, and runtime, linking how software is produced to where and how it runs.

- OX Security provides a unified control plane for software security, using PBOM to correlate code, pipeline, and runtime signals, so teams can prioritize exploitable risks and remediate them at the source instead of triaging disconnected alerts.

Software delivery teams now push dozens of changes per day through CI/CD pipelines, and security practitioners face a relentless stream of alerts tied to those changes. Recent industry research shows that security operations teams process an average of 960 alerts per day, and large organizations see over 3,000 daily alerts from dozens of tools. Roughly 40 percent of those alerts go uninvestigated because there are simply not enough analysts to keep up. Across developer and security forums, engineers evaluate AI security tools as a way out of the alert backlog: better signal, faster triage, less noise. What they find instead is a smarter presentation of the same underlying problem.

CI/CD speed and alert volume have already outpaced manual review capacity. Traditional scanners adapted with superficial intelligence still carry noise without linking their findings back to build, deployment, or execution reality. This raises a critical question for security teams: when you evaluate AI security tools for current CI/CD pipelines, what actually holds up under pressure?

Some teams are shifting away from this model, focusing on preventing risk when code is created, and then tracking how that code moves through builds, deployments, and runtime to see whether it actually becomes exposed. Instead of reacting to alerts, they aim to stop insecure patterns early and eliminate risks at their source before they propagate through the pipeline.

This article evaluates AI security tools from that perspective: not by how they score issues, but by whether they hold up when pipelines scale, change continuously, and reflect real execution behavior.

Why Teams are Moving to AI-Native Security Platforms

As CI/CD environments grew more complex, some security teams stopped trying to fix alert overload and started questioning the model itself. Instead of asking how to make scanners smarter, they asked whether scanning should be the decision layer at all.

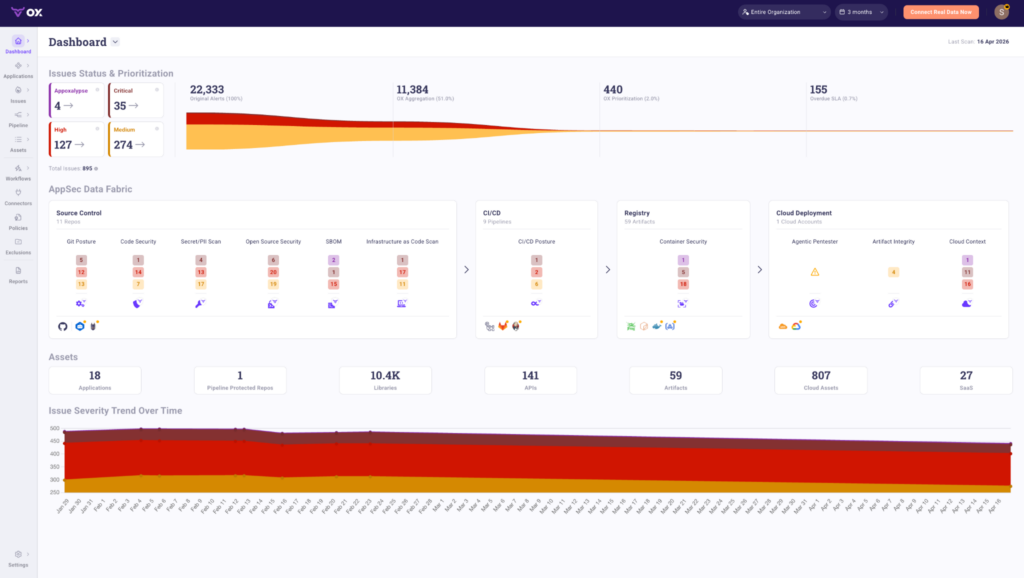

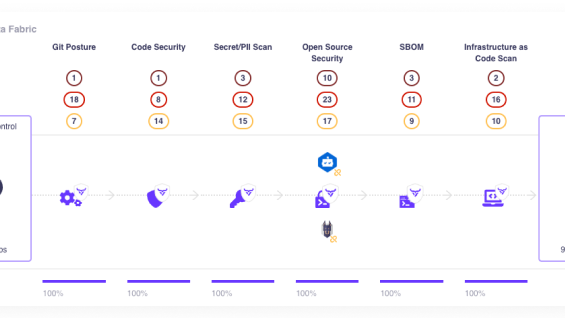

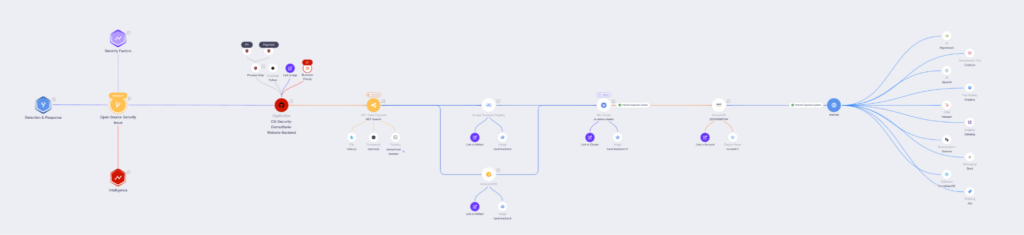

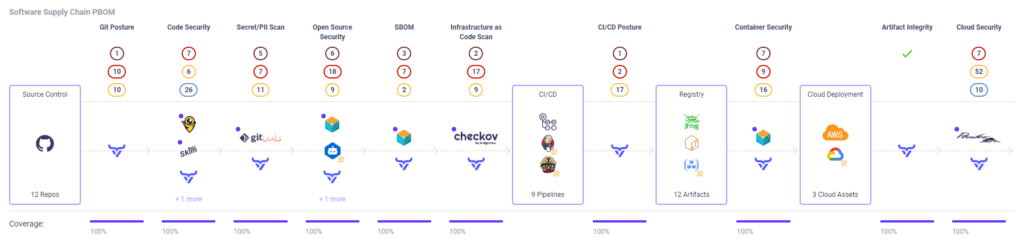

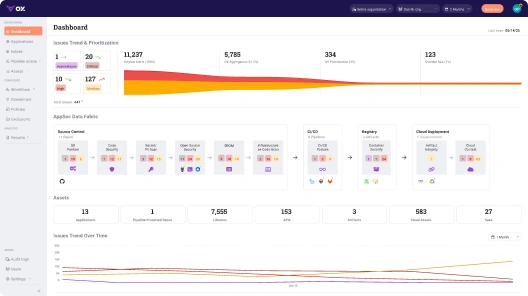

This is where platforms like OX Security enter the conversation, not as another scanner bolted onto the pipeline, but as an example of what it looks like when security posture is derived from the software supply chain itself: every repo, pipeline, artifact, and cloud deployment treated as a connected chain of evidence rather than isolated scan targets. The OX Unified Control Plane connects that chain across source control, CI/CD, registries, and cloud deployment through a single AppSec Data Fabric, with PBOM tracking every component, dependency, and configuration change in real time across all of them.

Scans, tests, and runtime signals still exist, but they feed into a posture model tied to software lineage rather than producing alerts in isolation. The result: 22,333 original alerts reduced to 440 prioritized issues, not by suppressing findings, but by evaluating risk against which artifacts are actually built, where they are deployed, and whether vulnerable paths are reachable in production.

The OX dashboard below aggregates security posture across 11 repos, 9 CI/CD pipelines, 59 artifacts, and cloud deployments, tracing every finding from source control through CI/CD, container registry, and Agentic Pentester, without leaving the same view:

Teams making this shift are not trying to reduce alert volume directly. They are trying to reason about exposure in systems where builds are short-lived, deployments are parallel, and ownership is distributed across code, pipelines, and cloud. AI assists analysis inside this model, but posture, lineage, and execution context determine what gets fixed.

Separate “AI-Powered Scanning” From Actual Application Security Posture

Most AI security tools enter organizations through a familiar door: scanning. They promise better signal by classifying issues, grouping alerts, or rewriting descriptions so teams can act faster. On paper, this sounds like progress. Inside a CI/CD pipeline, the limits show quickly.

AI-assisted scanners still observe code in isolation. They flag issues without knowing which build shipped, which deployment consumed the artifact, or whether the execution path exists at runtime. The result is faster alert generation without a corresponding increase in confidence. Engineers still ask the same questions before acting, just under tighter release deadlines.

Application security posture answers a different question. It is not “what did a scan report,” but “what software is actually running, how it got there, and where risk concentrates right now.” Without that shift, teams stay stuck correlating alerts manually across pipelines, branches, and environments.

This distinction matters because CI/CD systems do not fail due to a lack of detection. They fail when security decisions are made without lineage, ownership, and execution context. Understanding that gap is necessary before assessing why many AI security tools struggle once pipelines scale.

Why CI/CD Pipelines Invalidate Alert-First Security Models

CI/CD pipelines produce more artifacts than security teams can reason about manually. Each commit can generate multiple builds, each build can reach multiple environments, and none of these artifacts exist long enough for manual correlation.

Alert-first security models assume stability. They assume a repository maps cleanly to a deployment and that a reported issue refers to a concrete, inspectable state. In CI/CD, this assumption fails. By the time an alert is reviewed, the referenced artifact is often gone or has already been replaced.

This creates repeated operational problems. The same issue appears in multiple pipelines because shared code is rebuilt independently. Teams argue about priority because the alert does not specify whether the affected path executes in production. Fixes land without confidence that the vulnerable artifact has been removed from all active deployments.

Adding AI on top of this model changes presentation, not behavior. Classification improves, summaries improve, but the underlying question remains unanswered: which running software is actually exposed right now.

When alerts are not tied to build lineage, deployment state, and execution paths, they cannot drive reliable decisions. That limitation is structural, not a matter of smarter scoring.

What to Evaluate When Assessing AI Security Tools for CI/CD Pipelines

Analysing AI security tools in CI/CD is not about feature lists or model claims. It is about whether the tool continues to function once pipelines become fast, parallel, and disposable. The easiest way to assess this is to follow a real change through a real pipeline and observe where the tool helps and where it stays silent.

Consider a common setup.

A backend team merges a pull request generated with AI assistance. The change updates a shared authentication helper used by four services. Each service has its own CI/CD pipeline. All four pipelines build container images in parallel and deploy independently to different environments.

An AI security tool scans the repository and reports a high-severity issue in the updated helper. This is where evaluation starts.

Pipeline Awareness, Not Repository Awareness

In CI/CD, security teams do not fix repositories. They fix builds. In this example, the same commit flows through four pipelines, producing four different artifacts with different build arguments and dependency graphs. A repository-level alert forces engineers to manually map which pipeline runs consumed the vulnerable code.

A usable tool identifies the exact pipeline executions where the issue entered.

This level of pipeline awareness is especially relevant in GitHub-centric workflows, where posture signals can be tied directly to build and deployment events rather than repository snapshots, as shown in this example of enhanced ASPM with GitHub pipelines. A pipeline-aware tool ties the alert to specific builds and downstream deployments. Without that linkage, teams cannot tell which services are affected and which are clean, and alerts become advisory rather than actionable.
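To make the idea concrete, here is a minimal sketch of resolving a repository-level alert to the pipeline runs that consumed the flagged commit. The `PipelineRun` record, its fields, and all sample values are hypothetical, and the match is on the exact commit only; a real system would also need to account for later commits that still contain the change.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PipelineRun:
    """One CI/CD execution (hypothetical record shape, not a real API)."""
    run_id: str
    service: str
    commit_sha: str
    image_digest: str


def runs_consuming(commit_sha: str, runs: list[PipelineRun]) -> list[PipelineRun]:
    """Return the pipeline runs that built directly from the given commit."""
    return [run for run in runs if run.commit_sha == commit_sha]


# Illustrative data: one commit built by four pipelines, plus an older build.
runs = [
    PipelineRun("run-101", "billing", "abc123", "sha256:aa"),
    PipelineRun("run-102", "orders", "abc123", "sha256:bb"),
    PipelineRun("run-103", "search", "abc123", "sha256:cc"),
    PipelineRun("run-104", "gateway", "abc123", "sha256:dd"),
    PipelineRun("run-099", "billing", "9f8e7d", "sha256:ee"),  # built before the change
]

affected = runs_consuming("abc123", runs)
for run in affected:
    print(run.service, run.run_id, run.image_digest)
```

The point of the sketch is the shape of the answer: a list of builds and images, not a file path in a repository.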

Software Lineage Across Build, Deploy, And Runtime

Later that day, two of the four services rebuild their images due to unrelated changes. The other two keep running the original artifacts. At this point, the question is no longer whether the code was vulnerable. The question is which running artifacts still contain it.

Tools that stop tracking after the initial scan lose the thread here. They continue reporting the same issue without distinguishing between rebuilt and unchanged services. Teams cannot prove that remediation removed risk from production, and confidence erodes quickly.

Lineage across build, deploy, and runtime is not optional in CI/CD. It is the only way to verify that a fix actually changed exposure.
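As a rough illustration of that check, the sketch below asks which services are still running an image that contains the vulnerable helper. The two lookup tables, the version numbers, and the assumption that the two rebuilt images happened to pick up a fixed helper version are all invented for this example.

```python
# Hypothetical lineage data: which helper version each built image contains.
helper_version_by_image = {
    "sha256:aa": "2.3.0",  # original builds, vulnerable helper
    "sha256:bb": "2.3.0",
    "sha256:cc": "2.3.0",
    "sha256:dd": "2.3.0",
    "sha256:c2": "2.3.1",  # rebuilt later; assumed to include the fixed helper
    "sha256:d2": "2.3.1",
}

# Hypothetical deployment state: the image each service runs right now.
running_image_by_service = {
    "billing": "sha256:aa",
    "orders": "sha256:bb",
    "search": "sha256:c2",
    "gateway": "sha256:d2",
}

VULNERABLE_HELPER_VERSIONS = {"2.3.0"}


def still_exposed(running: dict[str, str],
                  contents: dict[str, str],
                  vulnerable_versions: set[str]) -> list[str]:
    """Return services whose currently running image contains a vulnerable version."""
    return sorted(
        service
        for service, digest in running.items()
        if contents[digest] in vulnerable_versions
    )


exposed_services = still_exposed(
    running_image_by_service, helper_version_by_image, VULNERABLE_HELPER_VERSIONS
)
print(exposed_services)
```

Note that the answer depends on deployment state, not on whether the fix exists in the repository.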

Reachability And Exploitability In Real Execution Paths

The vulnerable helper is only executed in one of the four services, and only on an internal endpoint. Static severity alone cannot express this. An AI security tool that prioritizes based on severity forces engineers to trace execution paths manually. They inspect routing logic, check service boundaries, and decide whether the issue is reachable from outside the trust boundary.

Tools that factor in execution context can answer this directly. Tools that cannot push the decision back to humans, slowing remediation and increasing debate during triage.
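One way to picture the difference is a sort key that puts execution context ahead of static severity. This is a hedged sketch, not how any particular product ranks findings: the `Finding` fields, the ordering rule, and the extra `storefront` finding are assumptions added for illustration.

```python
from dataclasses import dataclass

SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass(frozen=True)
class Finding:
    """A finding annotated with execution context (hypothetical shape)."""
    service: str
    severity: str
    executes_vulnerable_path: bool
    externally_reachable: bool


def priority_key(finding: Finding) -> tuple[bool, bool, int]:
    """Executed paths first, then external exposure, then static severity."""
    return (
        finding.executes_vulnerable_path,
        finding.externally_reachable,
        SEVERITY_RANK[finding.severity],
    )


findings = [
    Finding("billing", "high", False, False),    # helper present, never executed
    Finding("orders", "high", True, False),      # executed, internal endpoint only
    Finding("search", "high", False, False),
    Finding("storefront", "medium", True, True),  # unrelated hypothetical finding
]

ranked = sorted(findings, key=priority_key, reverse=True)
for finding in ranked:
    print(finding.service, finding.severity)
```

Under a severity-only ordering the three high-severity findings would tie at the top; with execution context, the one executed path and the externally reachable finding move ahead of code that never runs.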

Ownership And Remediation Clarity

Each service is owned by a different team. The alert must land with the team that can act. If the tool reports a single alert tied to a shared library, ownership becomes unclear. Teams discuss whether the fix should happen centrally or per service, and whether a fix in one pipeline resolves exposure elsewhere.

A useful tool maps the issue to specific services, pipeline runs, and owners. That clarity determines whether remediation happens in hours or stalls across sprints.

Behavior Under Scale And Change

The following week, the authentication helper is refactored again. Pipelines change, images are rebuilt, and environments rotate. Tools that recompute risk from scratch lose continuity. The same issue reappears under different identifiers, with no history tying it to previous remediation work. Engineers start ignoring alerts because they cannot distinguish new exposure from unresolved noise.

Tools that retain lineage and posture across change allow teams to reason about risk over time, not per scan. This is where many AI security tools begin to break down as CI/CD complexity increases.
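A common way to keep continuity across rescans is to derive issue identity from what the issue is rather than from which scan or image reported it. The sketch below assumes a fingerprint built from a rule ID, component name, and symbol name; these fields and the sample scan records are hypothetical, and a refactor that renames the symbol would still change the fingerprint.

```python
import hashlib


def issue_fingerprint(rule_id: str, component: str, symbol: str) -> str:
    """Stable identity: excludes scan IDs, image digests, and timestamps."""
    stable = "\x1f".join([rule_id, component, symbol])
    return hashlib.sha256(stable.encode("utf-8")).hexdigest()[:16]


# Two hypothetical scan results for the same issue, a week apart.
scan_first = {
    "scan_id": "s-1", "image": "sha256:aa",
    "rule_id": "weak-token-check", "component": "auth-helper", "symbol": "verify_token",
}
scan_after_refactor = {
    "scan_id": "s-9", "image": "sha256:f7",
    "rule_id": "weak-token-check", "component": "auth-helper", "symbol": "verify_token",
}


def fingerprint_of(scan: dict[str, str]) -> str:
    """Compute the fingerprint from the stable fields of a scan record."""
    return issue_fingerprint(scan["rule_id"], scan["component"], scan["symbol"])


same_issue = fingerprint_of(scan_first) == fingerprint_of(scan_after_refactor)
print(same_issue)
```

With a stable key, remediation history can be attached to the issue and survive image rebuilds and environment rotation.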

Where AI Security Tools Break Down in Real CI/CD Environments

Once CI/CD environments reach a certain scale, weaknesses in AI security tools stop being theoretical. They surface as operational friction that teams cannot work around for long.

Alert Duplication Across Parallel Pipelines

Shared libraries and services cause the same issue to surface multiple times. Each pipeline emits its own alert even though the underlying cause is identical. Security teams end up deduplicating manually. They compare commits, artifacts, and timestamps to determine whether alerts represent distinct exposure or the same risk replicated across builds.

AI-based grouping may cluster alerts, but it does not identify which running artifacts still matter. Remediation effort remains one change. Alert volume grows with pipeline count.

Loss Of Context After Deployment

Many AI security tools analyze code or images during build and stop tracking afterward. Once an artifact is deployed, promoted, or rebuilt, the tool loses visibility into whether the scanned version matches what is running. Security teams are forced to assume continuity between build and runtime. In CI/CD, that assumption is often wrong.

Inability To Reason About Partial Remediation

Fixes rarely roll out uniformly. Some services redeploy immediately. Others lag due to unrelated changes or release constraints. Tools without lineage treat all these states as equivalent. Alerts close too early or remain open after exposure is removed. Teams cannot tell which services still require action. Without artifact-level continuity, remediation status becomes unreliable.

Human Effort Replaces Missing Context

As these gaps appear, people compensate. Engineers reconstruct pipeline flows manually. AppSec teams chase ownership across services. Reviews shift from code to spreadsheets and meetings. The tool still reports, but it no longer guides decisions. At this point, intelligence in alerting does not change outcomes.

Breakdown Under Sustained Velocity

As delivery speed increases, these issues compound. Alerts accumulate faster than teams can reconcile them. Historical context fragments across releases. Once engineers cannot tell what is new, what is fixed, and what is still running, alerts stop influencing behavior.

All of these failure modes share the same root cause. The tools observe software at a moment in time, but CI/CD is a continuous system. To hold up under this pressure, security tooling must track software through pipelines, builds, and deployments as a single chain of custody. That is what allows teams to reason about exposure instead of alerts.

Why PBOM Is Required to Secure CI/CD at the Speed Code Now Moves

Every failure mode discussed earlier shares a structural cause. CI/CD pipelines continuously transform software, but most security tools observe it as a static object. PBOM exists to remove that mismatch by treating the pipeline itself as the source of truth.

PBOM Treats The Pipeline As The Unit Of Truth

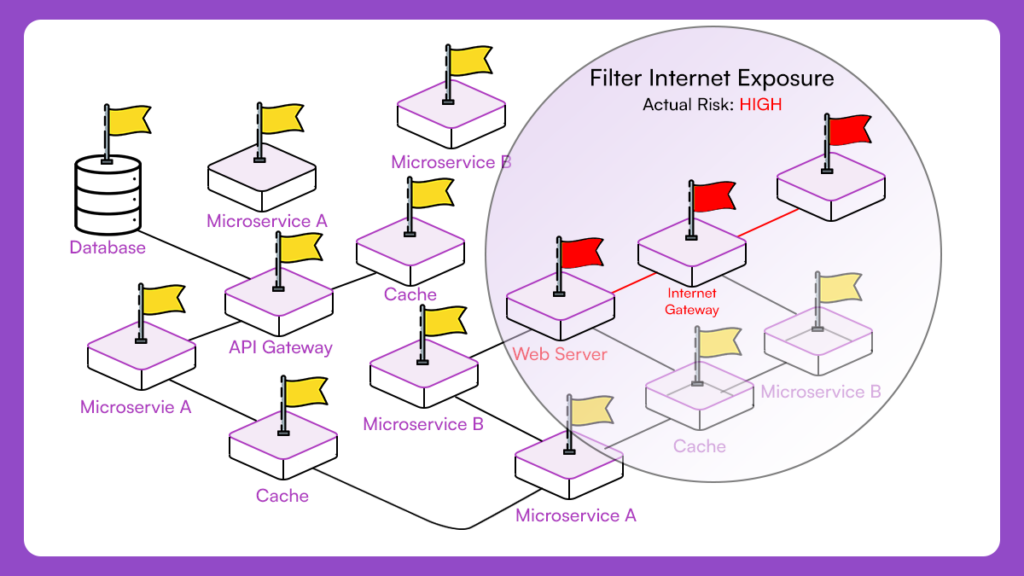

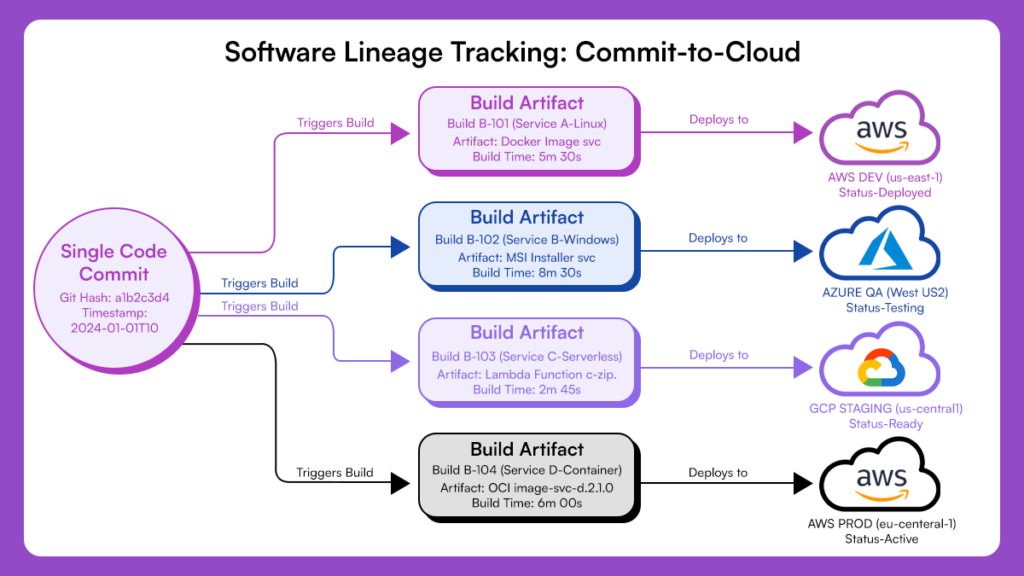

CI/CD pipelines are not just execution mechanisms. They encode how software is assembled, promoted, and released. Repository state alone cannot represent this. In the earlier example, the same commit entered four pipelines and produced four distinct artifacts. Each artifact differed slightly due to build context, dependency resolution, or deployment configuration. A repository-level view collapses these distinctions and forces security teams to reason abstractly about exposure.

PBOM records the full path each artifact takes through the pipeline. This lineage-centric view aligns closely with current software supply chain security, where trust is established through traceability rather than assumptions about source code state.

It ties commits to builds, builds to deployments, and deployments to environments. This allows security teams to reason about software as it actually exists, not as it was authored. Without this, every decision is an approximation.

The figure illustrates the full path each artifact takes through the pipeline and the lineage-centric view described above.
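The commit-to-build-to-deployment linkage described above can be sketched as a small data model. This is only an illustration under assumed record shapes: the `Build` and `Deployment` classes, their fields, and the sample values are hypothetical and do not represent the actual PBOM format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Build:
    """Links a commit to the artifact a pipeline produced (hypothetical)."""
    build_id: str
    commit_sha: str
    image_digest: str


@dataclass(frozen=True)
class Deployment:
    """Links an artifact to where it runs (hypothetical)."""
    image_digest: str
    service: str
    environment: str


def trace_commit(commit_sha: str,
                 builds: list[Build],
                 deployments: list[Deployment]) -> list[tuple[str, str, str]]:
    """Follow commit -> builds -> deployments; return (build_id, service, environment)."""
    build_by_digest = {
        b.image_digest: b.build_id for b in builds if b.commit_sha == commit_sha
    }
    return [
        (build_by_digest[d.image_digest], d.service, d.environment)
        for d in deployments
        if d.image_digest in build_by_digest
    ]


builds = [
    Build("b-1", "abc123", "sha256:aa"),
    Build("b-2", "abc123", "sha256:bb"),
    Build("b-3", "9f8e7d", "sha256:ee"),
]
deployments = [
    Deployment("sha256:aa", "billing", "production"),
    Deployment("sha256:aa", "billing", "staging"),
    Deployment("sha256:bb", "orders", "production"),
    Deployment("sha256:ee", "search", "production"),
]

paths = trace_commit("abc123", builds, deployments)
for path in paths:
    print(path)
```

The artifact digest is the join key in this sketch: it is what lets a question about source ("which commit?") be answered in terms of environments ("running where?").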

Lineage Resolves Alert Duplication Without Human Correlation

Alert duplication is not a tooling bug. It is the natural outcome of parallel pipelines acting independently. When the same issue surfaces in multiple builds, traditional tools emit multiple alerts because they lack a shared frame of reference. Engineers then correlate manually, determining whether alerts share a root cause or represent distinct exposure.

PBOM replaces this manual reasoning with structural linkage. It makes propagation explicit. A single issue can be traced across all derived artifacts without being treated as separate problems. When remediation occurs, PBOM shows exactly which pipeline paths absorbed the fix and which did not. This is the difference between managing risk propagation and managing alert volume.
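As a simplified picture of structural linkage, the sketch below collapses per-pipeline alerts into issues keyed by root cause, with each issue carrying the list of artifacts derived from it. The alert fields, rule names, and the choice of `(rule_id, source_commit)` as the root-cause key are assumptions made for this example.

```python
from collections import defaultdict

# Hypothetical alerts, one per pipeline, as a scanner-centric tool might emit them.
alerts = [
    {"alert_id": "A-1", "pipeline": "billing-ci", "rule_id": "weak-token-check",
     "source_commit": "abc123", "image": "sha256:aa"},
    {"alert_id": "A-2", "pipeline": "orders-ci", "rule_id": "weak-token-check",
     "source_commit": "abc123", "image": "sha256:bb"},
    {"alert_id": "A-3", "pipeline": "search-ci", "rule_id": "weak-token-check",
     "source_commit": "abc123", "image": "sha256:cc"},
    {"alert_id": "A-4", "pipeline": "gateway-ci", "rule_id": "weak-token-check",
     "source_commit": "abc123", "image": "sha256:dd"},
    {"alert_id": "A-5", "pipeline": "search-ci", "rule_id": "outdated-base-image",
     "source_commit": "77aa10", "image": "sha256:cc"},
]


def group_by_root_cause(alerts: list[dict[str, str]]) -> dict[tuple[str, str], list[str]]:
    """Map each (rule, introducing commit) to the images that carry the issue."""
    issues: dict[tuple[str, str], list[str]] = defaultdict(list)
    for alert in alerts:
        issues[(alert["rule_id"], alert["source_commit"])].append(alert["image"])
    return dict(issues)


issues = group_by_root_cause(alerts)
for root_cause, images in issues.items():
    print(root_cause, images)
```

Five alerts become two issues, and the affected artifacts stay attached to each issue instead of being discarded by the grouping.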

PBOM Makes Partial Remediation Visible And Trustworthy

In real CI/CD environments, remediation does not happen atomically. Some services redeploy quickly. Others wait for unrelated work to complete. Some never redeploy at all. Without lineage, tools infer remediation based on alert state or commit presence. This inference breaks down when deployment state diverges from source state. Teams close alerts prematurely or keep them open indefinitely because they cannot prove what is running.

PBOM makes remediation status a property of deployed artifacts, not alerts. Security teams can see which services still run exposed builds and which no longer do. This shifts remediation tracking from assumption to evidence.
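A minimal sketch of that idea: compute the status of an issue from what is deployed instead of from alert state. The status labels, the function name, and the sample digests are hypothetical.

```python
def remediation_status(exposed_digests: set[str],
                       running: dict[str, str]) -> tuple[str, list[str]]:
    """Derive issue status from deployed artifacts.

    exposed_digests: images known to contain the issue.
    running: service name -> image digest currently deployed.
    Returns a status label and the services that still need a redeploy.
    """
    pending = sorted(
        service for service, digest in running.items() if digest in exposed_digests
    )
    if not pending:
        return "remediated", pending
    if len(pending) == len(running):
        return "open", pending
    return "partially remediated", pending


# Illustrative state: two services redeployed with fixed images, two did not.
exposed = {"sha256:aa", "sha256:bb", "sha256:cc", "sha256:dd"}
running_now = {
    "billing": "sha256:aa",
    "orders": "sha256:b2",
    "search": "sha256:c2",
    "gateway": "sha256:dd",
}

status, pending_services = remediation_status(exposed, running_now)
print(status, pending_services)
```

Because the status is recomputed from deployment state, an alert cannot be closed simply because a fix was merged.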

Execution Context Requires Lineage To Be Meaningful

Reachability and exploitability analysis depend on execution context, but execution context alone is insufficient. Runtime signals without build lineage cannot tell whether the analyzed behavior corresponds to the scanned artifact. Conversely, static analysis without runtime linkage cannot determine whether vulnerable paths execute.

PBOM provides the anchor that connects these domains. It allows execution context to be evaluated against specific pipeline outputs. This grounds prioritization in reality rather than theoretical exposure. Without lineage, execution context floats independently. With lineage, it becomes actionable.
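The anchoring can be pictured as a join on artifact identity: a runtime observation only confirms a static finding when both refer to the same image. The record shapes and sample values below are assumptions for illustration only.

```python
# Hypothetical static findings, each tied to the image that was scanned.
static_findings = [
    {"image": "sha256:aa", "service": "billing", "symbol": "verify_token"},
    {"image": "sha256:bb", "service": "orders", "symbol": "verify_token"},
]

# Hypothetical runtime observations: which function ran inside which image.
runtime_observations = [
    {"image": "sha256:bb", "symbol": "verify_token"},  # matches a scanned image
    {"image": "sha256:zz", "symbol": "verify_token"},  # image with no scan lineage
]


def confirmed_reachable(findings: list[dict[str, str]],
                        observations: list[dict[str, str]]) -> list[dict[str, str]]:
    """Keep findings whose vulnerable symbol was observed running in the same image."""
    observed = {(o["image"], o["symbol"]) for o in observations}
    return [f for f in findings if (f["image"], f["symbol"]) in observed]


reachable = confirmed_reachable(static_findings, runtime_observations)
print([f["service"] for f in reachable])
```

The second observation shows the floating case: the function executed somewhere, but without a shared digest there is no way to tell whether it corresponds to anything that was analyzed.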

PBOM Sustains Security Reasoning As Pipelines Evolve

CI/CD systems change continuously. Pipelines are refactored. Services are split or merged. Build logic evolves. Tools that recompute posture from scratch lose continuity across these changes. Issues appear to vanish and reappear, breaking trust and forcing teams to rebuild mental context repeatedly.

PBOM preserves continuity because it tracks software through transformation, not snapshots. Security teams can reason about exposure over time even as pipelines change. This is what allows security tooling to remain useful under sustained delivery velocity.

Active ASPM as the Baseline for Analysing AI Security Tools

Once CI/CD pipelines are treated as continuous systems rather than scan targets, evaluation criteria change. At that point, the question is no longer whether an AI security tool detects issues accurately, but whether it contributes to a stable security posture as software moves.

Active Application Security Posture Management (ASPM) establishes that baseline.

CI/CD Becomes The System Of Record, Not The Scanner

Active ASPM treats the CI/CD pipeline as the authoritative source for understanding software state. Scans, tests, and runtime signals are inputs, not decision-makers. This matters because no single signal reflects reality in isolation. A scan reports code characteristics. Runtime data reflects behavior under specific conditions. Tests reflect intent at a moment in time. Without a system to reconcile these against pipeline lineage, each signal competes for attention.

Active ASPM resolves this by anchoring all signals to the pipeline that built the running software. Security decisions are made against actual artifacts in actual environments, not abstract representations.

Posture Replaces Alert Triage As The Decision Layer

In scanner-centric models, security work revolves around alerts. Teams debate severity, deduplicate issues, and negotiate priority across findings. Active ASPM shifts the focus to posture. Instead of asking which alerts are important, teams ask which parts of the system are exposed right now.

Posture reflects cumulative risk across pipelines, environments, and deployments, grounded in lineage. This reduces the need for human arbitration. Decisions follow from system state rather than interpretation of individual alerts.

AI Assists Analysis But Does Not Drive Prioritization

AI has a role in security workflows, but its role is bounded. Within Active ASPM, AI can assist by summarizing context, correlating signals, or speeding up investigation. It does not decide what matters. Prioritization is driven by posture, lineage, and execution context. This distinction prevents the system from drifting back into alert-first behavior. Intelligence supports decisions without replacing the underlying control model.

Why This Model Holds Under CI/CD Pressure

CI/CD pipelines amplify change. Tools that depend on static snapshots degrade as velocity increases. Alert volume grows, context fragments, and trust erodes. Active ASPM holds because it aligns security reasoning with how software actually moves. It preserves continuity across builds, deployments, and runtime. It allows partial remediation, drift, and parallel pipelines to be reasoned about without manual reconstruction.

This is why, when analysing AI security tools for CI/CD pipelines, the category matters. Tools built around scanning struggle as pipelines scale. Systems built around posture remain stable.

Analysing AI Security Tools Through an Active ASPM Lens

| Evaluation Dimension | Typical AI Security Tools | Active ASPM Baseline |
| --- | --- | --- |
| Unit of analysis | Repository, image, or scan result | CI/CD pipeline and build artifacts |
| Relationship to CI/CD | Integrates into pipeline steps | Treats pipeline as the system of record |
| Software lineage | Limited to commit or scan context | End-to-end across build, deploy, and runtime |
| Alert behavior | One alert per scan or pipeline | Single issue tracked across propagated artifacts |
| Remediation tracking | Inferred from alert closure | Derived from deployed artifact state |
| Handling partial fixes | Requires manual validation | Explicitly visible across pipelines |
| Reachability context | Mostly static assumptions | Evaluated against execution paths tied to artifacts |
| Ownership clarity | Often ambiguous for shared code | Mapped to specific services and pipelines |
| Behavior under scale | Alert volume increases with velocity | Posture remains stable as pipelines grow |
| Role of AI | Scores, groups, summarizes alerts | Assists analysis within posture constraints |
| Decision driver | Alert severity and confidence | Exposure and lineage-backed posture |

Conclusion: What Actually Holds Up When Release Speed Keeps Increasing

AI-written code is not slowing down. CI/CD pipelines are not becoming simpler. Human review capacity has already been exceeded in most organizations. Under these conditions, tools that analyze code snapshots in isolation will continue to generate alerts faster than teams can interpret them. Alert quality improves. Decision quality does not. The gap between detection and action widens, and alert quality improvements don’t close it.

What holds up are systems that track software through pipelines, preserve lineage across builds and deployments, and tie risk to real execution paths. That’s what keeps prioritization stable when velocity keeps climbing. Some tools sound useful in controlled demos. Fewer function when CI/CD is parallel, ephemeral, and distributed across forty teams.
