TL;DR

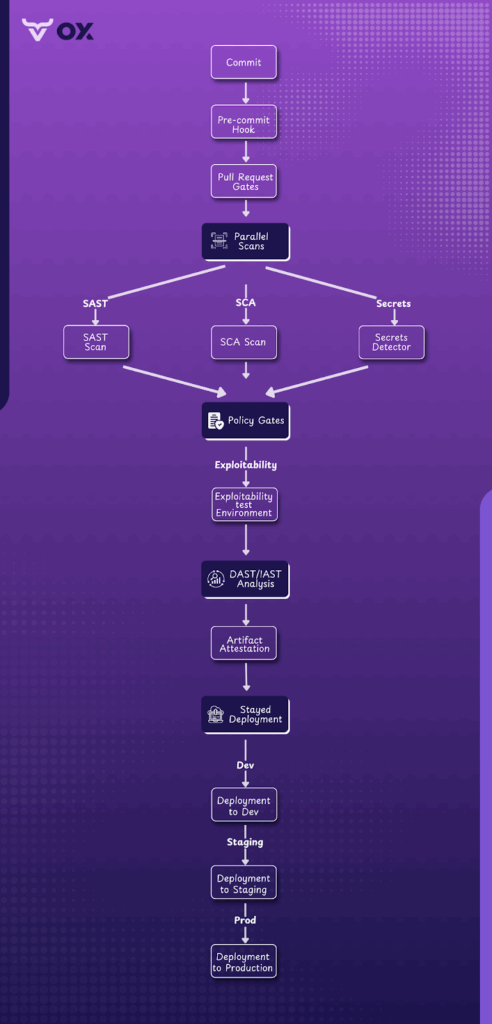

- Application Vulnerability Assessment (AVA) integrates security checks directly into CI/CD pipelines for early detection, which includes catching hardcoded API keys during code commit, insecure dependency versions in pull requests, or misconfigured IaC templates before build deployment.

- During a typical pipeline run, SAST can flag injection flaws in pull requests; SCA can block the introduction of known vulnerable libraries like Log4j; secrets detection can halt commits containing exposed AWS keys; IaC scanning can prevent insecure cloud configurations such as open S3 buckets; and DAST can catch issues like XSS in a staging environment before release.

- Real-time visibility helps prioritize actively exploitable application security vulnerabilities, such as an unpatched RCE flaw in production traffic, over low-severity CVEs that pose minimal immediate risk.

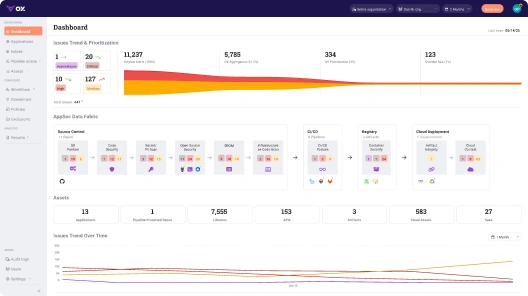

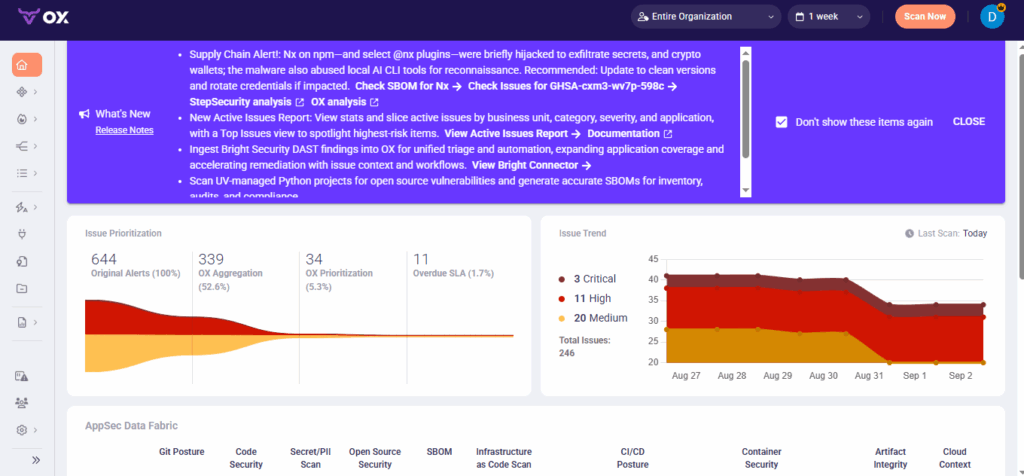

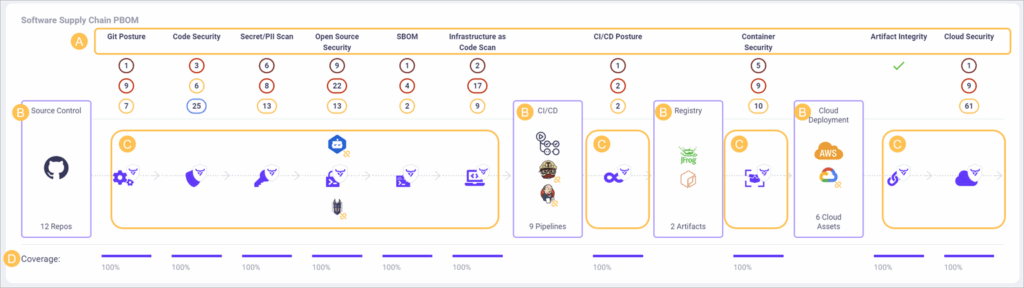

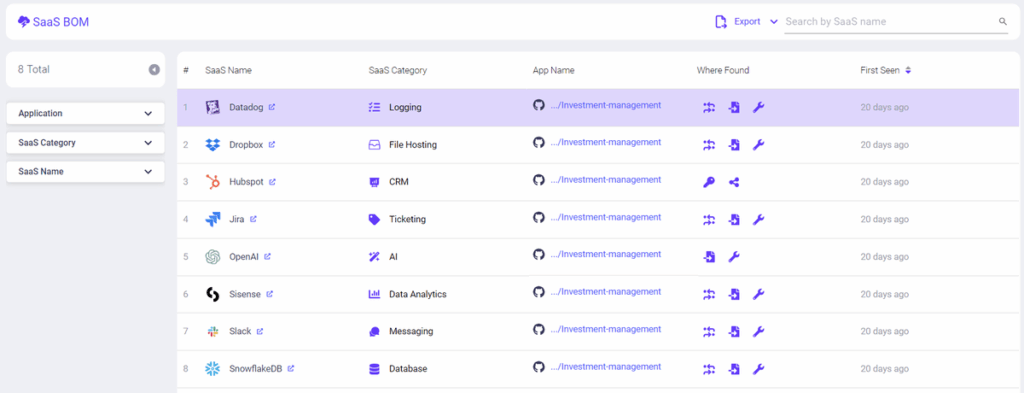

- OX Security correlates findings from SAST, DAST, SCA, and IaC scanners to surface risks, from supply chain compromises and misconfigurations to exploitable code flaws, all in one dashboard.

- Policy-as-code ensures vulnerabilities, like hardcoded credentials flagged in secrets detection or misconfigured IAM roles in IaC scans, block deployments until fixes (e.g., rotating exposed keys or applying least-privilege policies) are verified in CI/CD.

- SBOM and PBOM tracking confirm that only trusted components, such as verified open-source libraries with signed releases, container images from approved registries, and dependencies scanned for zero critical CVEs, ship to production.

In the first half of 2025, VulnCheck reported 432 CVEs that were actively exploited in the wild, with 32% being weaponized on the same day they were disclosed.

That’s a sharp jump from 23% last year, proving what most AppSec leaders already feel daily: the response window is collapsing. Zero-day and one-day exploits are no longer rare events; they’re now a primary attack vector. Attackers are chaining vulnerabilities faster than defenders can react, and traditional quarterly scans or post-release tests simply can’t keep pace anymore.

Effective application vulnerability management is critical for modern AppSec teams. The real challenge isn’t just detecting vulnerabilities through application security testing, it’s identifying which ones actually matter, like an exposed AWS key in a public repo or a remote code execution flaw in a core service, and remediating them before attackers move in. That’s becoming increasingly difficult. Most modern organizations manage multiple microservices, APIs, and containerized apps, and threats evolve weekly with new exploit chains and tactics.

AppSec teams frequently support far more developers than security specialists, which can make scaling vulnerability management challenging even in well-resourced organizations. Add to that the growing dependence on third-party libraries, the noise created by false positives from scanners, and the cultural friction between developers focused on speed and security teams focused on safety, and you get an environment in which critical vulnerabilities can easily slip through unnoticed.

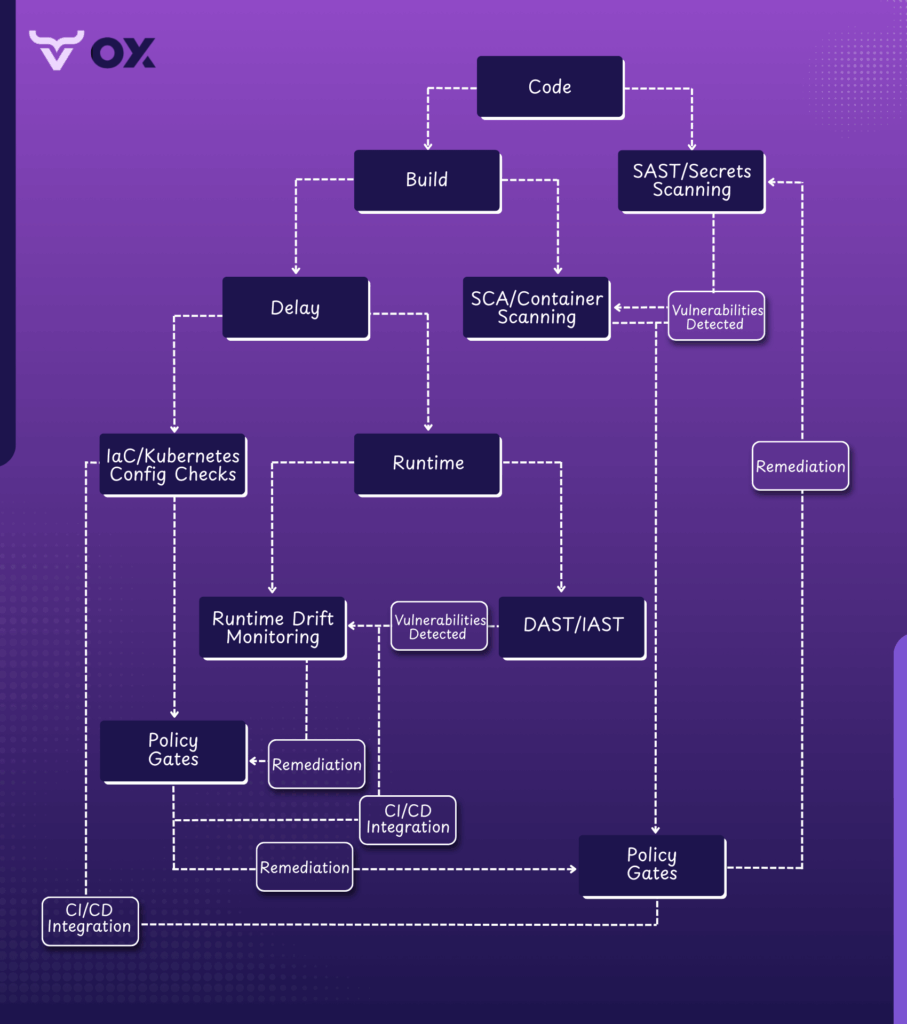

This is where Application Vulnerability Assessment (AVA) comes in. AVA integrates security directly into the CI/CD pipeline, embedding controls like SAST, SCA, secrets detection, IaC and Kubernetes scanning, DAST, and runtime monitoring into the development workflow itself.

Done right, it allows teams to prioritize exploitable vulnerabilities, like critical SQL injection flaws or misconfigured S3 buckets, while cutting down noise from low-impact issues such as missing HTTP security headers or outdated but unused libraries, enabling faster, focused remediation without sacrificing security.

Vendors are also adapting to this reality: in March 2025, CrowdStrike introduced an AI-powered Network Vulnerability Assessment within Falcon Exposure Management, enabling organizations to prioritize risks intelligently, without deploying extra agents or juggling more tools. The future of AppSec is clear: continuous, automated AVA isn’t optional anymore, it’s a baseline requirement.

How Application Vulnerability Assessment Works

An AVA is not a one-time scan. It’s an ongoing process of finding, assessing, and ranking vulnerabilities, such as insecure APIs, misconfigured Kubernetes files, or outdated open-source packages, throughout the entire application lifecycle. The goal is simple: detect weaknesses that attackers could exploit and remediate them efficiently before they escalate into breaches.

Here’s how a modern AVA program works in practice:

1. Planning and Scoping

Every assessment starts by defining what’s in scope:

- Component Mapping: Identify all components, including microservices, APIs, databases, containers, and infrastructure.

- Objectives: Clarify whether the goal is compliance, securing a release, or reducing overall risk.

- Testing Boundaries: Choose between black-box (no code access), white-box (full code access), or gray-box (partial knowledge).

This step avoids wasted effort and ensures high-value assets are prioritized.

2. Vulnerability Identification

This is where security flaws are detected using a mix of automated tools and manual testing:

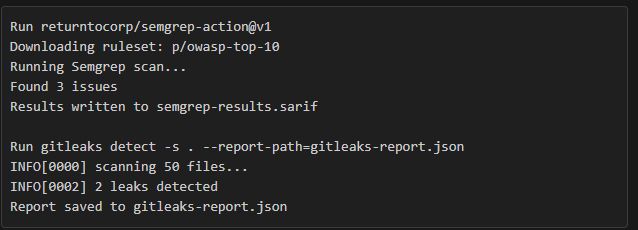

- SAST: Scans source code or binaries for insecure coding patterns before deployment. Tools like Semgrep can catch injection flaws, hardcoded secrets, and authentication issues early.

- DAST: Tests a running application by simulating attacks against APIs, web endpoints, and authentication flows. It surfaces flaws introduced during development, such as SQL injection or XSS, at the point where they can actually be exploited at runtime.

- SCA: Scans open-source libraries and third-party dependencies for known CVEs. Tools like Trivy generate SBOMs to track components and highlight high-risk versions.

- Secrets detection: Identifies leaked API keys, tokens, and credentials in source code and configs using tools like Gitleaks.

- IaC and Kubernetes scanning: Reviews Terraform, Helm, and Kubernetes manifests for misconfigurations such as overly permissive IAM roles, unencrypted secrets, or open network policies.

- Manual business logic testing: Security engineers validate workflows, authorization models, and edge cases that scanners often miss.

3. Risk Analysis and Prioritization

Not every vulnerability deserves equal attention. Modern AVA platforms prioritize based on exploitability and business impact, not just CVSS scores:

- Exploitability: Does the issue have a known exploit? Is it reachable from the internet?

- Business context: Does it affect a critical service, sensitive data, or a regulated environment?

- False positive validation: OX automatically correlates signals across scanners and runtime context to filter out false positives, so security and development teams can focus only on actionable findings.

Tools like OX Security enhance this step with business-priority-based severity. OX correlates vulnerabilities with application criticality, runtime reachability, and known exploit data (e.g., KEV, EPSS) so teams can focus on the 5–10% of issues that truly matter.
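
As a rough illustration of exploitability-weighted prioritization, the sketch below folds CVSS, EPSS, KEV membership, internet reachability, and asset criticality into a single score. The `Finding` fields, the weights, and the `priority_score` function are assumptions made up for this example; they are not OX Security's scoring model, and the CVE identifiers are placeholders.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    cve_id: str
    cvss: float               # CVSS base score, 0.0-10.0
    epss: float               # EPSS probability, 0.0-1.0
    in_kev: bool              # listed in the CISA KEV catalog
    internet_reachable: bool
    asset_criticality: int    # hypothetical scale: 1 (low) to 3 (business-critical)


def priority_score(f: Finding) -> float:
    """Return a 0-100 score. The weights are illustrative, not calibrated."""
    score = f.cvss * 4.0                        # up to 40 points for technical severity
    score += f.epss * 20.0                      # up to 20 points for exploit likelihood
    score += 20.0 if f.in_kev else 0.0          # known exploitation in the wild
    score += 10.0 if f.internet_reachable else 0.0
    score += (f.asset_criticality - 1) * 5.0    # up to 10 points for business context
    return round(min(score, 100.0), 1)


findings = [
    Finding("CVE-A", cvss=9.8, epss=0.02, in_kev=False,
            internet_reachable=False, asset_criticality=1),
    Finding("CVE-B", cvss=7.5, epss=0.90, in_kev=True,
            internet_reachable=True, asset_criticality=3),
]

# Highest priority first
ranked = sorted(findings, key=priority_score, reverse=True)
for f in ranked:
    print(f.cve_id, priority_score(f))
```

In this sample the lower-CVSS finding ranks first because it is in KEV, internet-reachable, and sits on a critical asset, which is the behavior a CVSS-only ranking would miss.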

4. Remediation and Automation

Once vulnerabilities are prioritized, development, security, and DevOps teams work together to address them:

- Remediate: Apply patches, rewrite insecure code, or update misconfigured infrastructure to resolve issues.

- Mitigate: Use short-term controls, such as WAF rules or API throttling, until a full fix is ready.

- Accept: Document and monitor low-impact vulnerabilities that don’t justify immediate fixes.

Modern AVA solutions integrate directly into CI/CD pipelines, automatically creating tickets, assigning issues to code owners, and triggering pull requests for patches. This reduces manual coordination and accelerates response.

5. Reporting

Good reporting connects developers, AppSec teams, and leadership:

- Developers get contextual remediation steps and exploit paths.

- AppSec teams get risk dashboards aligned with business priorities.

- Executives get summaries showing exposure across applications and environments.

With tools like OX Security, reporting is dynamic, and findings are automatically mapped to application owners and risk profiles.

6. Verification and Continuous Monitoring

An AVA doesn’t end after remediation:

- Rescans verify fixes and ensure no regressions.

- Continuous monitoring catches new CVEs, dependency risks, and configuration drifts as they emerge.

- Policy-as-code gates block only exploitable, high-severity vulnerabilities in CI/CD pipelines, preventing unnecessary build failures while keeping security intact.
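
The verification step can be pictured as a set comparison between two scans. This is a minimal sketch that assumes every finding already has a stable fingerprint string (for example rule plus file plus package); the `compare_scans` name and the fingerprint format are hypothetical.

```python
def compare_scans(before: set, after: set, previously_fixed: frozenset = frozenset()) -> dict:
    """Classify finding fingerprints between the pre-fix scan and the rescan."""
    appeared = after - before
    return {
        "fixed": before - after,                    # gone after remediation
        "still_open": before & after,               # fix did not land or did not work
        "regressed": appeared & previously_fixed,   # closed earlier, now back
        "new": appeared - previously_fixed,         # never seen before
    }


result = compare_scans(
    before={"sqli:api/orders.js", "secret:config.js", "cve:lodash"},
    after={"secret:config.js", "xss:web/form.js", "cve:minimist"},
    previously_fixed=frozenset({"cve:minimist"}),
)
for status, fingerprints in result.items():
    print(status, sorted(fingerprints))
```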

Application Vulnerability Assessment focuses on exploitable risks, not scanning everything. Modern AVA combines layered testing, automation, and business-aware prioritization to cut false positives, reduce alert fatigue, and keep engineers focused on real threats. This approach streamlines remediation, accelerates secure releases, and ensures security integrates into development without slowing velocity. By the end of 2025, continuous and automated AVA will have become the baseline for protecting modern applications.

Tools for Application Vulnerability Assessment

The techniques covered so far (SAST, DAST, SCA, secrets detection, IaC scanning, and manual testing) only pay off once they are mapped to concrete tools. This section moves from concepts to implementation.

Since each testing method targets a different layer of the application stack, no single scanner can provide full coverage. A layered toolchain is what delivers comprehensive coverage, accurate prioritization, and efficient remediation.

1. SAST and Secrets Detection

SAST tools scan source code, bytecode, or binaries without executing the application, making them highly effective for detecting insecure coding patterns early in the development cycle. They can uncover issues like injection flaws, unsafe deserialization, broken authentication logic, and poor data handling practices before the code reaches production.

Tools like CodeQL help detect complex security flaws across large codebases, while Semgrep offers lightweight, rule-based scanning tailored to your frameworks and coding patterns. SonarQube goes a step further by combining static security analysis with overall code quality and maintainability checks.

For example, a GitHub Actions workflow can run CodeQL on every pull request to detect complex vulnerabilities like SQL injection or unsafe deserialization before merging:

    name: CodeQL Security Scan

    on: [push, pull_request]

    jobs:
      analyze:
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v3
          - uses: github/codeql-action/init@v3
            with:
              languages: javascript
          - uses: github/codeql-action/analyze@v3

Gitleaks complements SAST by performing secrets detection, scanning repositories for hard-coded credentials, API keys, and tokens so that sensitive data is caught and removed before it ever reaches production.

2. SCA and Container Scanning

Modern applications rely heavily on open-source libraries, third-party packages, and containerized environments, which often introduce vulnerabilities into the supply chain.

SCA tools like Snyk and Mend.io scan dependencies, container images, and OS packages for known CVEs and generate an SBOM to track every component used in the application. Advanced SCA platforms now incorporate reachability analysis, which focuses only on vulnerable functions actually used at runtime rather than flooding developers with irrelevant alerts. Integrating these scans into CI/CD pipelines ensures that exploitable vulnerabilities are detected before deployment and provides teams with clear remediation guidance.

For instance, a Node.js project can run Trivy on Docker images as part of the build:

    # Build the Docker image
    docker build -t my-app:latest .

    # Scan the image for HIGH and CRITICAL vulnerabilities
    trivy image --severity HIGH,CRITICAL --format table my-app:latest

Output:

    2025-08-29T10:21:31.456+0530 INFO Detected OS: debian
    2025-08-29T10:21:31.456+0530 INFO Detecting Debian vulnerabilities...

    my-app:latest (debian 11.8)
    ==================================
    Total: 2 (HIGH: 1, CRITICAL: 1)

    ┌─────────────┬────────────────┬───────────────┬────────────────────────────┐
    │ Severity    │ Package        │ Vulnerability │ Title                      │
    ├─────────────┼────────────────┼───────────────┼────────────────────────────┤
    │ HIGH        │ openssl        │ CVE-2023-0465 │ Memory corruption in ...   │
    │ CRITICAL    │ glibc          │ CVE-2024-3005 │ Buffer overflow ...        │
    └─────────────┴────────────────┴───────────────┴────────────────────────────┘

This ensures that important CVEs are caught before deployment, and findings can be exported in SARIF format for integration with security dashboards.
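
To gate a build on these results rather than read a table, one option is to have Trivy write JSON (`--format json --output trivy-report.json`) and filter it in a script. The sketch below assumes the report's `Results[].Vulnerabilities[]` layout with `VulnerabilityID`, `PkgName`, and `Severity` fields; the sample report is hypothetical, trimmed down, and uses placeholder identifiers. Trivy can also fail the step on its own with `--exit-code 1`, so treat this as an illustration of the logic, not a required extra step.

```python
import json


def blocking_vulns(report: dict, severities=("HIGH", "CRITICAL")) -> list:
    """Collect (id, package, severity) for findings at the given severities."""
    hits = []
    # "Results" and "Vulnerabilities" may be missing or null when nothing is found.
    for result in report.get("Results") or []:
        for vuln in result.get("Vulnerabilities") or []:
            if vuln.get("Severity") in severities:
                hits.append((vuln["VulnerabilityID"], vuln["PkgName"], vuln["Severity"]))
    return hits


# Hypothetical report with placeholder identifiers.
sample_report = json.loads("""
{
  "Results": [
    {"Target": "my-app:latest (debian 11.8)",
     "Vulnerabilities": [
       {"VulnerabilityID": "CVE-0000-0001", "PkgName": "openssl", "Severity": "HIGH"},
       {"VulnerabilityID": "CVE-0000-0002", "PkgName": "zlib", "Severity": "LOW"}
     ]},
    {"Target": "package-lock.json", "Vulnerabilities": null}
  ]
}
""")

hits = blocking_vulns(sample_report)
for vuln_id, pkg, severity in hits:
    print(f"{severity}: {pkg} ({vuln_id})")
# A CI wrapper would exit non-zero here whenever hits is non-empty.
```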

3. DAST and IAST

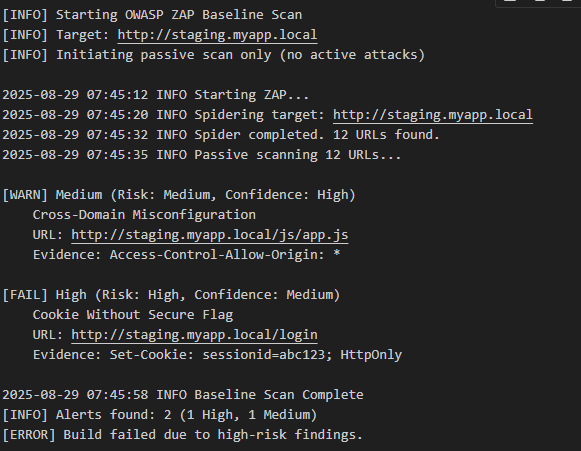

Dynamic testing tools, such as OWASP ZAP and Burp Suite, simulate attacks against running applications to detect exploitable runtime flaws.

For CI/CD integration, ZAP can run headless against a staging environment:

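    # The ZAP images are now published as ghcr.io/zaproxy/zaproxy; owasp/zap2docker-* is no longer updated
    docker run -t ghcr.io/zaproxy/zaproxy:stable zap-baseline.py -t http://staging.myapp.local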

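Interactive testing (IAST) instruments the application at runtime to provide context-aware detection, revealing vulnerabilities that only appear under specific conditions or API flows. RASP relies on similar instrumentation, but it is a protection control that blocks attacks in production rather than a testing technique.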

4. Kubernetes and IaC Scanning

Infrastructure-as-Code scanning tools like Checkov and tfsec evaluate Terraform, Helm, and Kubernetes manifests for misconfigurations before they are applied. kube-bench and kube-hunter complement them by assessing running clusters: kube-bench checks configuration against the CIS Kubernetes Benchmark, and kube-hunter probes for exploitable weaknesses.

For example, a simple tfsec command can scan Terraform modules:

    tfsec ./terraform/

These tools detect overly permissive IAM roles, unsecured network policies, and unencrypted secrets, preventing misconfigurations from reaching production environments.

5. Aggregation and Correlation Platforms

Managing findings across multiple scanners can overwhelm AppSec and DevSecOps teams, especially when different tools generate duplicate reports, conflicting severities, and inconsistent formats. Platforms like OX Security address this problem by aggregating, normalizing, and correlating vulnerabilities from various tools, including SAST, SCA, DAST, IaC scanning, and secrets detection, into a single unified dashboard.

Beyond simple consolidation, OX Security applies business-priority-based severity, combining vulnerability data with runtime reachability, exploitability feeds like KEV and EPSS, and application criticality to highlight the vulnerabilities that actually matter.

It also automates routing findings to code owners, integrates seamlessly with Jira and Slack, and enforces policy-as-code security gates in CI/CD pipelines. This reduces alert fatigue, accelerates remediation, and keeps security aligned with delivery speed.
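
The aggregation and normalization step can be sketched in a few lines. This example assumes each scanner's output has already been mapped to a shared record shape with `tool`, `issue_id`, `location`, and `severity` keys; that shape, the `correlate` function, and the scanner names are hypothetical, and real platforms match findings with far richer heuristics.

```python
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def correlate(findings: list) -> list:
    """Merge findings that share an issue and location, keeping the highest severity."""
    merged = {}
    for f in findings:
        key = (f["issue_id"], f["location"])
        severity = f["severity"].lower()       # normalize inconsistent casing
        entry = merged.setdefault(
            key,
            {"issue_id": f["issue_id"], "location": f["location"],
             "severity": severity, "tools": []},
        )
        if SEVERITY_RANK[severity] > SEVERITY_RANK[entry["severity"]]:
            entry["severity"] = severity       # resolve conflicting severities upward
        if f["tool"] not in entry["tools"]:
            entry["tools"].append(f["tool"])   # remember which scanners agreed
    return sorted(merged.values(),
                  key=lambda e: SEVERITY_RANK[e["severity"]], reverse=True)


raw = [
    {"tool": "scanner-a", "issue_id": "CWE-89", "location": "api/orders.js:42", "severity": "HIGH"},
    {"tool": "scanner-b", "issue_id": "CWE-89", "location": "api/orders.js:42", "severity": "critical"},
    {"tool": "scanner-a", "issue_id": "CWE-798", "location": "config.js:34", "severity": "medium"},
]

unified = correlate(raw)
for item in unified:
    print(item["severity"], item["issue_id"], item["location"], item["tools"])
```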

Use Cases for Application Vulnerability Assessment

AVA delivers the most value when it’s embedded directly into modern development and deployment workflows. Its impact is especially visible across three scenarios: securing CI/CD pipelines, managing microservices at scale, and enforcing runtime controls for cloud-native environments.

1. Secure CI/CD Pipelines

Integrating AVA directly into CI/CD pipelines ensures vulnerabilities are detected and addressed before code reaches production. SAST, SCA, and secrets detection scans can run automatically on every commit or pull request, failing builds only when exploitable high-severity vulnerabilities are detected.

Here’s what a typical secure pipeline looks like:

Commit → Pre-commit SAST + secret scan → PR gates → CI orchestration → scan results → artifact signing → deploy approval

Where OX Security makes a difference is in policy-driven automation. Within OX, you can configure policy profiles that define security rules across your entire organization. Each policy can be:

- Enabled or disabled per requirement

- Assigned a baseline severity (Low, Medium, High, Critical)

- Fine-tuned to match your compliance standards or risk tolerance

When a policy violation is detected during a scan, OX automatically:

- Creates an issue on the Issues dashboard

- Adjusts severity based on runtime exploitability and business context

- Enforces policy-as-code gating in the CI/CD pipeline

This ensures that only high-risk artifacts, code changes, and configuration updates are blocked, while low-risk findings are logged for later remediation without slowing releases.

Building on this risk-driven approach, the same principles become essential when applied across distributed microservices architectures. Unlike monolithic systems, where vulnerabilities can be triaged centrally, microservices require per-service prioritization, ownership mapping, and policy enforcement to maintain consistency without slowing delivery.

2. Microservices at Scale

In microservices architectures, teams often maintain dozens of independent services with distinct owners. Vulnerability assessment helps enforce service-specific baselines and path-based filtering, allowing each team to focus on the vulnerabilities relevant to their services. Ownership routing ensures that findings are automatically assigned to the right developers, and per-service risk SLAs can be tracked to monitor compliance over time.

For instance, a payments service may block deployment if any exploitable critical CVE is found in its dependencies, while a public-facing frontend may enforce stricter DAST and IAST requirements on authentication and input validation endpoints. This granular approach helps maintain security across large, distributed teams without creating bottlenecks.
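
Ownership routing in a monorepo often comes down to matching a finding's file path against per-service prefixes. The sketch below is one simple way to do that with longest-prefix matching; the `OWNERS` map, team names, and `route` function are hypothetical.

```python
# Hypothetical path-prefix to owner mapping
OWNERS = {
    "services/": "platform-team",
    "services/payments/": "team-payments",
    "services/frontend/": "team-web",
}


def route(path: str, owners: dict = OWNERS, default: str = "appsec-triage") -> str:
    """Return the owner with the longest matching path prefix."""
    matches = [prefix for prefix in owners if path.startswith(prefix)]
    if not matches:
        return default                      # unowned paths go to central triage
    return owners[max(matches, key=len)]    # most specific prefix wins


print(route("services/payments/src/charge.js"))
print(route("services/search/index.js"))
print(route("docs/README.md"))
```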

3. Cloud-Native Runtime

Cloud-native environments introduce additional risks through containers, Kubernetes clusters, and multi-stage pipelines. AVA tools secure these environments by:

- Enforcing container image gates to block vulnerable or unsigned images

- Validating Kubernetes manifests and IaC templates against security policies

- Leveraging SBOM/PBOM-backed provenance to ensure only verified, trusted artifacts are deployed

For example, a staging pipeline can automatically reject any container image missing a signed SBOM, while a Kubernetes admission controller can prevent deployment of pods that violate predefined security policies. Platforms like OX Security provide the automation needed to enforce these controls at scale while maintaining detailed audit trails for compliance.

Example Scenarios

1. Setting up end-to-end SAST + Secrets for a Node.js monorepo

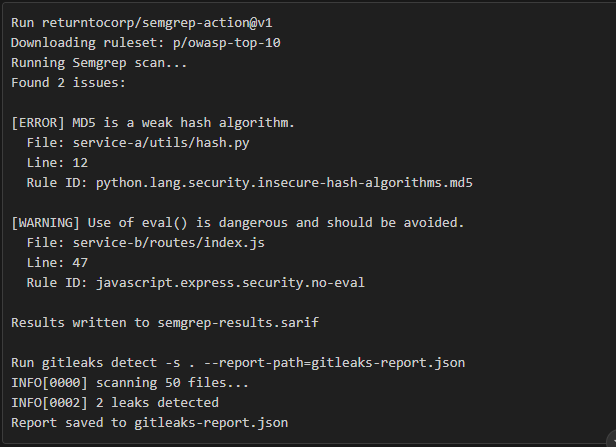

To secure a Node.js monorepo, start by wiring static analysis and secret detection into both push and pull request workflows. Use CodeQL or Semgrep for SAST with path-based rules tailored to each package, and integrate Gitleaks to detect exposed secrets.

In a CI (Continuous Integration) pipeline using GitHub Actions, you can configure a matrix across multiple packages to run scans in parallel. Upload SARIF output to GitHub’s code scanning interface, fail only on new high-severity issues, and cache rulesets to speed up subsequent runs:

security-scan.yml

Place this file at .github/workflows/security-scan.yml:

    name: Security Scan

    on: [push, pull_request]

    jobs:
      scan:
        runs-on: ubuntu-latest
        permissions:
          contents: read
          security-events: write  # required to upload SARIF to code scanning
        strategy:
          matrix:
            package: [service-a, service-b]
        steps:
          # 1. Checkout repository
          - uses: actions/checkout@v3

          # 2. Set up Node.js environment
          - name: Set up Node.js
            uses: actions/setup-node@v3
            with:
              node-version: '20'

          # 3. Install dependencies for each service
          - name: Install Dependencies
            run: npm install
            working-directory: ${{ matrix.package }}

          # 4. Run Semgrep with SARIF output (CLI run from its container image)
          - name: Run Semgrep
            run: |
              docker run --rm -v "$PWD:/src" -w /src semgrep/semgrep \
                semgrep scan --config p/owasp-top-ten --sarif \
                --output semgrep-results.sarif ${{ matrix.package }}

          # 5. Run Gitleaks for secret detection
          #    (assumes the gitleaks binary is already installed on the runner)
          - name: Run Gitleaks
            run: gitleaks detect -s . --report-path=gitleaks-report.json

          # 6. Upload SARIF results to GitHub Code Scanning
          - name: Upload Semgrep results
            if: always()
            uses: github/codeql-action/upload-sarif@v3
            with:
              sarif_file: semgrep-results.sarif
              category: semgrep-${{ matrix.package }}

          # 7. Upload Gitleaks report as artifact
          - name: Upload Gitleaks report
            if: always()
            uses: actions/upload-artifact@v4
            with:
              name: gitleaks-report-${{ matrix.package }}
              path: gitleaks-report.json

          # 8. Evaluate SARIF findings for severity gating
          #    (runs in the same job so the SARIF file is still on disk)
          - name: Fail on High Severity Issues
            run: |
              if grep -Eq '"level": *"error"' semgrep-results.sarif; then
                echo "High severity vulnerabilities detected. Failing pipeline."
                exit 1
              else
                echo "No high severity vulnerabilities found."
              fi

GitHub Actions Log Output

semgrep-results.sarif

gitleaks-report.json

    [
      {
        "Description": "Hardcoded API Key",
        "StartLine": 34,
        "StartColumn": 15,
        "File": "service-a/config.js",
        "Commit": "",
        "Secret": "AIzaSy***********",
        "RuleID": "generic-api-key"
      },
      {
        "Description": "Potential AWS Secret Key",
        "StartLine": 88,
        "StartColumn": 12,
        "File": "service-b/.env",
        "Secret": "AKIAIOSFODNN7********",
        "RuleID": "aws-access-key"
      }
    ]

For developers, pre-commit hooks managed by Husky can run fast SAST rules locally before pushing. PR templates should include a “risk context” checklist, highlighting any critical dependencies or newly added secrets. This gives DevSecOps teams guardrails without slowing delivery: vulnerabilities are caught early, and the relevant context is always included in the review.
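
The severity gate in the workflow greps the SARIF file for an `error` level, which fails on every existing issue, not only new ones. A rough sketch of the "fail only on new high-severity issues" idea is to parse the SARIF and subtract a baseline. The functions below rely only on the standard SARIF fields `runs[].results[]`, `level`, `ruleId`, and `locations[].physicalLocation`; identifying a result by rule, file, and line is a simplifying assumption that breaks when code moves, and the helper names and sample data are hypothetical.

```python
def error_results(sarif: dict) -> set:
    """Return (ruleId, file, line) for every result reported at level "error"."""
    found = set()
    for run in sarif.get("runs", []):
        for res in run.get("results", []):
            if res.get("level") != "error":
                continue
            physical = (res.get("locations") or [{}])[0].get("physicalLocation", {})
            uri = physical.get("artifactLocation", {}).get("uri", "")
            line = physical.get("region", {}).get("startLine", 0)
            found.add((res.get("ruleId", ""), uri, line))
    return found


def new_errors(current: dict, baseline: dict) -> set:
    """Errors present in the current scan but absent from the baseline scan."""
    return error_results(current) - error_results(baseline)


def _result(rule: str, level: str, uri: str, line: int) -> dict:
    # Helper that builds a minimal SARIF result for the sample data below.
    return {"ruleId": rule, "level": level, "locations": [{"physicalLocation": {
        "artifactLocation": {"uri": uri}, "region": {"startLine": line}}}]}


baseline = {"runs": [{"results": [_result("sqli", "error", "service-a/db.js", 12)]}]}
current = {"runs": [{"results": [
    _result("sqli", "error", "service-a/db.js", 12),      # already known
    _result("xss", "error", "service-b/view.js", 40),     # newly introduced
    _result("style", "warning", "service-b/view.js", 5),  # below the gate
]}]}

introduced = new_errors(current, baseline)
print(sorted(introduced))
# A CI step would exit non-zero when introduced is non-empty.
```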

2. Running a DAST scan against a React+Node test environment

Dynamic scanning requires a running instance of your application. Use ephemeral environments spun up via Docker Compose or PR preview environments to run headless scanners without impacting production. For example, OWASP ZAP can perform both baseline and active scans against authenticated routes, and importing an OpenAPI specification improves coverage by automatically enumerating API endpoints:

    # Mount the current directory at /zap/wrk so the HTML report is written to the host
    docker run -v "$(pwd):/zap/wrk/:rw" -t ghcr.io/zaproxy/zaproxy:stable zap-baseline.py \
      -t http://preview.myapp.local \
      -r zap-baseline-report.html

Once the results are ingested, they are triaged automatically inside the platform. OX Security deduplicates findings by URL and sink, attaches reproducible evidence for developers, and creates tickets only for exploitable paths. This built-in workflow reduces alert fatigue and keeps remediation focused on vulnerabilities that actually impact production, sparing AppSec teams from repetitive manual triage.

Best Practices for Application Vulnerability Assessment

Most enterprise pipelines already run SCA and container scans. The problem is not missing scans but getting flooded with thousands of CVEs that don’t show which ones actually matter in production. That leads to stalled upgrades, noisy dashboards, and developers ignoring alerts. To make vulnerability management work at scale, you need practices that keep dependencies up to date, ensure consistency of findings across tools, and enforce policies directly in CI/CD.

1. Dependency lifecycle management

Automate patch and minor version updates using tools like Dependabot or Renovate. Require manual review for major version jumps to avoid breaking changes. Generate an SBOM for every build (CycloneDX or SPDX) and store it with the artifact. For high-risk packages, enforce signed artifacts and provenance checks using Sigstore, Cosign, or in-toto. Always use lockfiles and reproducible builds so you can roll back safely if an upgrade causes issues.
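
The patch/minor versus major split can be expressed as a small classifier. This sketch assumes plain `MAJOR.MINOR.PATCH` version strings with no pre-release or build suffix and that the candidate is newer than the current version; the function names are hypothetical, and tools like Dependabot or Renovate implement this logic themselves through configuration.

```python
def parse_version(version: str) -> tuple:
    """Split a plain MAJOR.MINOR.PATCH string into three integers."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def classify_bump(current: str, candidate: str) -> str:
    """Name the most significant version component that changed."""
    cur, new = parse_version(current), parse_version(candidate)
    if new[0] != cur[0]:
        return "major"
    if new[1] != cur[1]:
        return "minor"
    if new[2] != cur[2]:
        return "patch"
    return "none"


def can_auto_merge(current: str, candidate: str) -> bool:
    """Patch and minor bumps are automated; major bumps need a human review."""
    return classify_bump(current, candidate) in ("patch", "minor")


for cur, new in [("4.17.20", "4.17.21"), ("4.17.21", "4.18.0"), ("4.18.0", "5.0.0")]:
    print(cur, "->", new, classify_bump(cur, new), can_auto_merge(cur, new))
```

Note that for `0.x` releases a minor bump may still be breaking under semantic versioning, so a stricter rule for those packages can be worthwhile.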

2. Continuous scanning and normalized output

Run SCA and container/OS image scans in CI for every pull request and image build. Use scanners like Snyk, Trivy, or Grype. Normalize scan output into a standard format, such as SARIF, and feed it into your central policy engine or dashboard. This ensures consistent triage across teams, rather than chasing tool-specific reports.

3. Risk scoring and reachability analysis

Prioritize based on exploitability, not raw CVE counts. Incorporate runtime reachability data (e.g., traces, call-graphs, IAST/DAST results) and business impact when scoring vulnerabilities. Block or escalate only when a package is proven exploitable in your environment. If reachability cannot be confirmed, tag the finding for verification instead of automatically blocking pipelines.
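
A minimal sketch of that decision logic follows, with three outcomes: block, verify, or log. The `Decision` enum, the `gate` function, and the exact rules (for example, treating a reachable finding without a known exploit as "verify") are assumptions chosen for illustration and should be tuned to your own risk tolerance.

```python
from enum import Enum
from typing import Optional


class Decision(Enum):
    BLOCK = "block"     # fail the pipeline
    VERIFY = "verify"   # tag for manual verification, do not block
    LOG = "log"         # record for later remediation


def gate(severity: str, reachable: Optional[bool], exploit_known: bool) -> Decision:
    """Decide what to do with a finding; reachable=None means "not yet confirmed"."""
    if severity.lower() not in {"high", "critical"}:
        return Decision.LOG
    if reachable is None:
        return Decision.VERIFY          # reachability unknown: verify, don't block
    if not reachable:
        return Decision.LOG             # vulnerable code is never called
    return Decision.BLOCK if exploit_known else Decision.VERIFY


print(gate("critical", reachable=True, exploit_known=True))
print(gate("critical", reachable=None, exploit_known=True))
print(gate("high", reachable=False, exploit_known=True))
print(gate("medium", reachable=True, exploit_known=True))
```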

4. Upgrade validation and safe rollout

Validate dependency upgrade pull requests with unit, integration, contract, and smoke tests that specifically exercise affected code paths. Use phased canary rollouts (e.g., 1% → 5% → 25% → 100%) with automated health and security checks such as error rates, latency thresholds, and IDS/WAF alerts before promoting changes to full production.
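
The phased rollout can be sketched as a loop that promotes traffic stage by stage and rolls back on the first failed check. The `set_traffic` and `healthy` callbacks are hypothetical stand-ins for whatever your deployment tooling and monitoring expose; a real rollout would also wait for a soak period at each stage.

```python
from typing import Callable, Sequence

STAGES = (1, 5, 25, 100)  # traffic percentages from the rollout plan


def rollout(set_traffic: Callable[[int], None],
            healthy: Callable[[int], bool],
            stages: Sequence[int] = STAGES) -> int:
    """Promote through each stage; return the final traffic share (0 = rolled back)."""
    for pct in stages:
        set_traffic(pct)
        # healthy() stands in for error-rate, latency, and IDS/WAF alert checks
        if not healthy(pct):
            set_traffic(0)   # roll back on the first failed check
            return 0
    return stages[-1]


# Usage with fake callbacks: the health check starts failing at 25% traffic.
history = []
final = rollout(history.append, lambda pct: pct < 25)
print(history, final)
```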

5. Mitigations and policy-as-code enforcement

When patches are delayed, apply runtime mitigations like WAF rules, reverse proxies, RASP agents, or eBPF-based detectors like Falco. Reduce exposure through segmentation, least-privilege access, or feature flag isolation. Encode enforcement rules in policy-as-code using OPA, Conftest, or Kyverno so that CI/CD gates only fail when vulnerabilities meet defined exploitability and severity conditions.

6. Exceptions and SLAs with accountability

For vulnerabilities that can’t be patched immediately, the security platform (e.g. OX Security) can automatically open an exception ticket in your tracker (Jira, Azure DevOps, ServiceNow). The ticket records the risk score, compensating controls, owner, verification steps, and an expiry date. Automated reminders and scheduled audits then ensure these exceptions don’t linger past their approved window. Define SLAs based on exposure, for example: critical + reachable + public exploit fixed in 24–72 hours, high severity within 7 days, medium severity within 30–90 days.
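
The example SLA tiers translate directly into a deadline calculation. This sketch uses the upper bound of each window mentioned above (72 hours, 7 days, 90 days); treating a critical finding without confirmed reachability and a public exploit like a high-severity one, and leaving low severity without an SLA, are assumptions, as are the function names.

```python
from datetime import datetime, timedelta
from typing import Optional


def remediation_deadline(found_at: datetime, severity: str,
                         reachable: bool = False,
                         public_exploit: bool = False) -> Optional[datetime]:
    """Return the fix-by time for a finding, or None when no SLA applies."""
    severity = severity.lower()
    if severity == "critical" and reachable and public_exploit:
        return found_at + timedelta(hours=72)
    if severity in ("critical", "high"):
        return found_at + timedelta(days=7)
    if severity == "medium":
        return found_at + timedelta(days=90)
    return None  # low severity: no SLA defined in this sketch


def is_breached(deadline: Optional[datetime], now: datetime) -> bool:
    """True when an SLA exists and the current time is past it."""
    return deadline is not None and now > deadline


found = datetime(2025, 1, 1, 9, 0)
urgent = remediation_deadline(found, "critical", reachable=True, public_exploit=True)
print(urgent, is_breached(urgent, datetime(2025, 1, 5, 9, 0)))
```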

Limitations of Traditional AVA Practices

While AVA provides strong benefits, existing approaches still suffer from several limitations:

Manual Effort

In many organizations, application vulnerability assessments are still performed manually or require setting up multiple point solutions. Security teams often spend significant time configuring scanners, maintaining rulesets, and triggering scans for each environment. This not only slows down development cycles but also increases the risk of human error and inconsistent coverage.

Fragmented Results

Traditional AVA tools typically operate in isolation, each producing its own reports with different formats, severity ratings, and taxonomies. This fragmentation makes it difficult for teams to consolidate findings or prioritize them effectively. Developers are forced to cross-reference results from multiple dashboards or spreadsheets, which creates delays and increases the likelihood of overlooking critical vulnerabilities.

Alert Fatigue

Many vulnerability scanners still rely heavily on raw CVE counts, flagging every known issue regardless of its exploitability or relevance to the application’s runtime context. This often results in long lists of vulnerabilities, many of which are either false positives or non-exploitable. Developers quickly become desensitized to alerts, leading to slower responses or even ignoring findings altogether. Without context-aware prioritization, alert fatigue undermines the effectiveness of AVA and creates friction between security and development teams.

Pipeline Breaks

Rigid security gates that fail builds based on raw counts of vulnerabilities, rather than exploitability or business impact, often introduce unnecessary friction into CI/CD pipelines. This can frustrate developers when builds are blocked for issues that pose little to no real risk. Overly strict policies reduce development velocity and sometimes encourage teams to bypass or disable scans altogether. These challenges are amplified in DevSecOps and AppSec environments, where teams are under constant pressure to secure fast-moving pipelines and distributed services.

OX Security: End-to-End Application Vulnerability Assessment

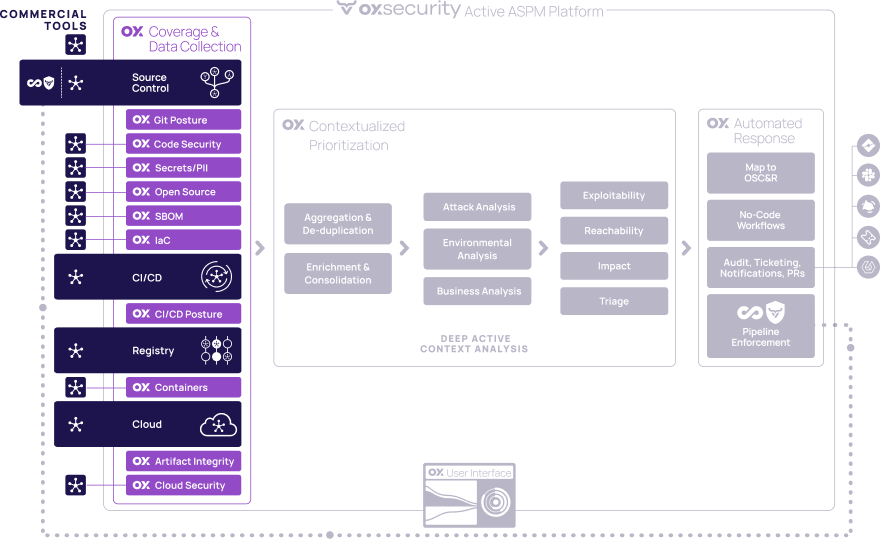

OX Security is designed to meet the needs of DevSecOps, AppSec, and cloud-native teams by automating and correlating vulnerability management across the entire software lifecycle. Unlike fragmented AVA approaches, OX Security delivers an integrated platform that unifies all layers of application vulnerability assessment, ensuring actionable results instead of scattered, hard-to-prioritize findings.

Correlation Across Tools

OX Security ships with 10 built-in scanners covering SAST, SCA, DAST, secrets, IaC, container, SBOM/PBOM integrity and more. On top of that, it ingests data from over 100 third-party tools. These include not just security engines but also ticketing (Jira, ServiceNow), alerting and collaboration (Slack, Teams), project management, CI/CD, and cloud providers, so AppSec, DevOps, and platform teams can see and act on every risk from one place. Whether you’re using open-source tools like Semgrep, Trivy, Checkov, or CodeQL, or commercial scanners, the results flow into a single, normalized dashboard. This unified view eliminates data silos, reduces duplicate findings, and provides a central source of truth for both security and engineering teams. As a result, teams benefit from improved visibility and faster, more aligned decision-making.

Exploitability-Weighted Prioritization

The OX platform works in three stages, shown as panels in the accompanying diagram:

1. Coverage and Data Collection (Left Panel)

- Collects security data from Git, CI/CD, Registry, Containers, and Cloud sources.

- OX modules include Git Posture, Code Security, Secret/PII, Open Source, SIEM, IaC, CI/CD Posture, Artifact Integrity, and Cloud Security.

2. Contextualized Prioritization (Center Panel)

- OX automatically runs incoming findings through four stages:

- Aggregation and De-duplication: it removes duplicates from different scanners.

- Environment and Consolidation: it merges related findings and enriches them with environment data.

- Attack and Environmental Analysis: it evaluates exploitability and reachability in your specific runtime context.

- Business Analysis: it scores the issue’s impact based on asset criticality and business importance.

3. Automated Response (Right Panel)

- Integrates with ticketing, notification, and pipeline tools.

- Enables:

- No-Code Workflows for automated fixes.

- Audit and Reporting for compliance.

- OSCAR Integration for orchestrating responses.


Instead of overwhelming teams with raw CVE lists, OX Security automatically categorizes vulnerabilities by severity, reachability, and runtime exposure. This ensures that developers focus on issues attackers can realistically exploit in production. By embedding exploitability context, OX minimizes alert fatigue and directs remediation efforts to where they have the most impact.

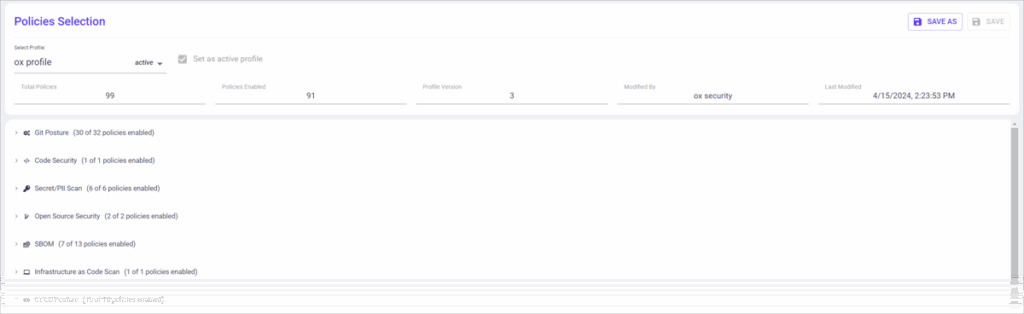

Automated Gates and Policies

Profile Overview: The image shows a policy selection page with the title “Policies Selection.”

Policies Statistics: It highlights key statistics like:

- Total Policies: 99

- Policies Enabled: 91

- Profile Version: 3

Security Focus: The active profile is focused on security, as shown in the “Last Modified” section.

Policy Types: There are multiple categories of policies listed, such as:

- Git Posture (e.g., 3/3 policies enabled)

- Code Security (e.g., 1/1 policy enabled)

- Open Source Security (e.g., 2/2 policies enabled)

- SCA (Software Composition Analysis)

- SBOM (Software Bill of Materials)

- Infrastructure as Code (IaC)

OX enables organizations to enforce security checks in CI/CD pipelines without compromising delivery speed. Policies can be customized to block only exploitable, high-risk vulnerabilities while allowing safe builds to progress. This balance ensures that security is maintained as a “gate, not a wall,” aligning protection with developer agility.

SBOM/PBOM Provenance

Beyond scanning, OX Security verifies the integrity of every artifact through SBOM and PBOM tracking. It ensures that only authorized, signed, and trusted components make it into production. This proactive approach blocks supply chain risks and strengthens compliance with regulatory and internal security standards.
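As a rough illustration of a provenance check, the sketch below walks a CycloneDX-style SBOM (a JSON document with a `components` array whose entries carry `name` and `purl`) and flags components that come from an unapproved source or lack a verified signature. The approved prefixes, the `signed_purls` set, and the component names are hypothetical; how signatures are actually verified is outside the scope of this sketch.

```python
import json

# Hypothetical allowlist of package sources.
APPROVED_PURL_PREFIXES = ("pkg:pypi/", "pkg:npm/")


def untrusted_components(sbom: dict, signed_purls: set) -> list:
    """Return human-readable problems for components that fail provenance checks."""
    problems = []
    for comp in sbom.get("components", []):
        purl = comp.get("purl", "")
        if not purl.startswith(APPROVED_PURL_PREFIXES):
            problems.append(f"{comp['name']}: source not approved")
        elif purl not in signed_purls:
            problems.append(f"{comp['name']}: no verified signature")
    return problems


sbom = json.loads("""
{
  "bomFormat": "CycloneDX",
  "components": [
    {"name": "example-lib",   "purl": "pkg:pypi/example-lib@1.0.0"},
    {"name": "example-ui",    "purl": "pkg:npm/example-ui@2.0.0"},
    {"name": "internal-tool", "purl": "pkg:generic/internal-tool@0.1.0"}
  ]
}
""")

# Assume signature verification happened upstream and produced this set.
signed = {"pkg:pypi/example-lib@1.0.0"}

for problem in untrusted_components(sbom, signed):
    print(problem)
```

An empty result would let the artifact proceed; any entry in the list is a reason to hold the release.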

Conclusion

Application vulnerability assessment is not a one-off activity; it should be embedded into day-to-day engineering practices. By encoding security policy directly into CI/CD pipelines, automating gates based on exploitability rather than raw counts, and continuously monitoring runtime and infrastructure, teams can focus remediation efforts on vulnerabilities that truly matter.

Integrating SBOM/PBOM tracking and artifact signatures with vulnerability findings provides end-to-end integrity verification, ensuring that only trusted components reach production. When assessment data, artifact provenance, and automated gates are combined, organizations gain measurable outcomes: faster detection, reduced risk exposure, and security insights that developers can act on without slowing down delivery. Platforms like OX Security enable this integration, correlating findings across code, dependencies, infrastructure, and runtime for a holistic security posture.

For DevSecOps and AppSec teams, this means security is embedded directly into engineering workflows. For compliance-driven enterprises, it provides audit-ready lineage of artifacts and vulnerabilities. And for cloud-native adopters, it delivers scalable, automated governance across distributed microservices.

FAQs

1. Is Vulnerability Assessment the Same as Pentesting?

Vulnerability assessment is usually automated and breadth-oriented, scanning code, dependencies, and infrastructure to flag potential issues early. Penetration testing is typically manual or semi-automated and depth-oriented, simulating real-world attacks to validate whether specific vulnerabilities can actually be exploited. The two approaches are complementary: assessments give you continuous coverage for CI/CD, while pentests provide point-in-time validation of exploitability.

2. Will Scanners Break My Pipeline?

Properly integrated scanners fail fast, but only on findings that warrant it. With selective gates, SARIF-based reporting, and caching, they can run efficiently without blocking day-to-day development. Fail the build only on findings weighted by exploitability, and report the rest without breaking the pipeline.
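One way to implement a selective gate is to read the scanner's SARIF output and fail only on results at the `error` level. The sketch below assumes SARIF 2.1.0's `runs[].results[].level` layout and, as a simplification, treats a missing `level` as `warning` (the specification also allows the rule's default configuration to set it). The rule IDs are made up.

```python
import json


def blocking_results(sarif: dict, blocking_levels=("error",)) -> list:
    """Collect SARIF results whose level should fail the pipeline."""
    blockers = []
    for run in sarif.get("runs", []):
        for result in run.get("results", []):
            # Simplification: fall back to "warning" when no level is given.
            level = result.get("level", "warning")
            if level in blocking_levels:
                blockers.append(result)
    return blockers


sarif = json.loads("""
{
  "version": "2.1.0",
  "runs": [
    {
      "tool": {"driver": {"name": "example-scanner"}},
      "results": [
        {"ruleId": "EX001", "level": "error",   "message": {"text": "SQL injection"}},
        {"ruleId": "EX002", "level": "warning", "message": {"text": "Weak hash"}},
        {"ruleId": "EX003",                     "message": {"text": "Style issue"}}
      ]
    }
  ]
}
""")

blockers = blocking_results(sarif)
exit_code = 1 if blockers else 0  # a CI step would pass this to sys.exit()
print(exit_code, [r["ruleId"] for r in blockers])  # 1 ['EX001']
```

Warnings and notes still appear in the report, but only the `error` result changes the exit code.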

3. How Often Should We Run Assessments?

Ideally, every commit and pull request should trigger SAST, secrets, and dependency scans. DAST/IAST can run against ephemeral or staging environments per PR or nightly, depending on resource availability. Continuous assessment ensures early detection and reduces production risk.

4. Where Do SBOMs/PBOMs Fit?

SBOMs and PBOMs provide artifact provenance, helping teams verify that all dependencies and container images are trusted. They integrate with vulnerability findings to enforce policy gates, prevent deployment of unauthorized components, and maintain supply chain integrity.

5. How Do We Avoid Alert Fatigue?

With OX, alert fatigue is addressed automatically rather than by hand-tuning rules. The platform deduplicates findings across its 10 native scanners and 100+ integrations, enriches them with exploitability, reachability and runtime context, and then routes only actionable items to the right owners using CODEOWNERS or component metadata. High-severity issues in production paths are surfaced at the top of the dashboard, while low-risk items are grouped for bulk remediation. This automated triage keeps developer productivity high without drowning teams in noise.
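For teams curious about what deduplication and owner routing involve, here is a simplified sketch. It is not how OX implements triage: the fingerprint (rule, path, line), the finding keys, and the prefix-based owner map are assumptions, and real CODEOWNERS files use glob patterns rather than plain prefixes.

```python
def deduplicate(findings: list) -> list:
    """Merge findings that share a (rule, path, line) fingerprint."""
    merged = {}
    for f in findings:
        key = (f["rule"], f["path"], f["line"])
        if key not in merged:
            merged[key] = {"rule": f["rule"], "path": f["path"],
                           "line": f["line"], "scanners": set()}
        merged[key]["scanners"].add(f["scanner"])
    return list(merged.values())


def route(path: str, owners: dict) -> str:
    """Pick the owner with the longest matching path prefix (simplified)."""
    matches = [prefix for prefix in owners if path.startswith(prefix)]
    return owners[max(matches, key=len)] if matches else "unassigned"


raw = [
    {"scanner": "sast-a", "rule": "sql-injection", "path": "services/payments/api.py", "line": 42},
    {"scanner": "sast-b", "rule": "sql-injection", "path": "services/payments/api.py", "line": 42},
    {"scanner": "sast-a", "rule": "weak-hash", "path": "docs/example.py", "line": 7},
]
owners = {"services/": "@platform", "services/payments/": "@payments"}

unique = deduplicate(raw)
for item in unique:
    print(item["rule"], sorted(item["scanners"]), route(item["path"], owners))
```

Here two scanners reporting the same injection flaw collapse into one item routed to the payments team, and the unowned path falls back to `unassigned`.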